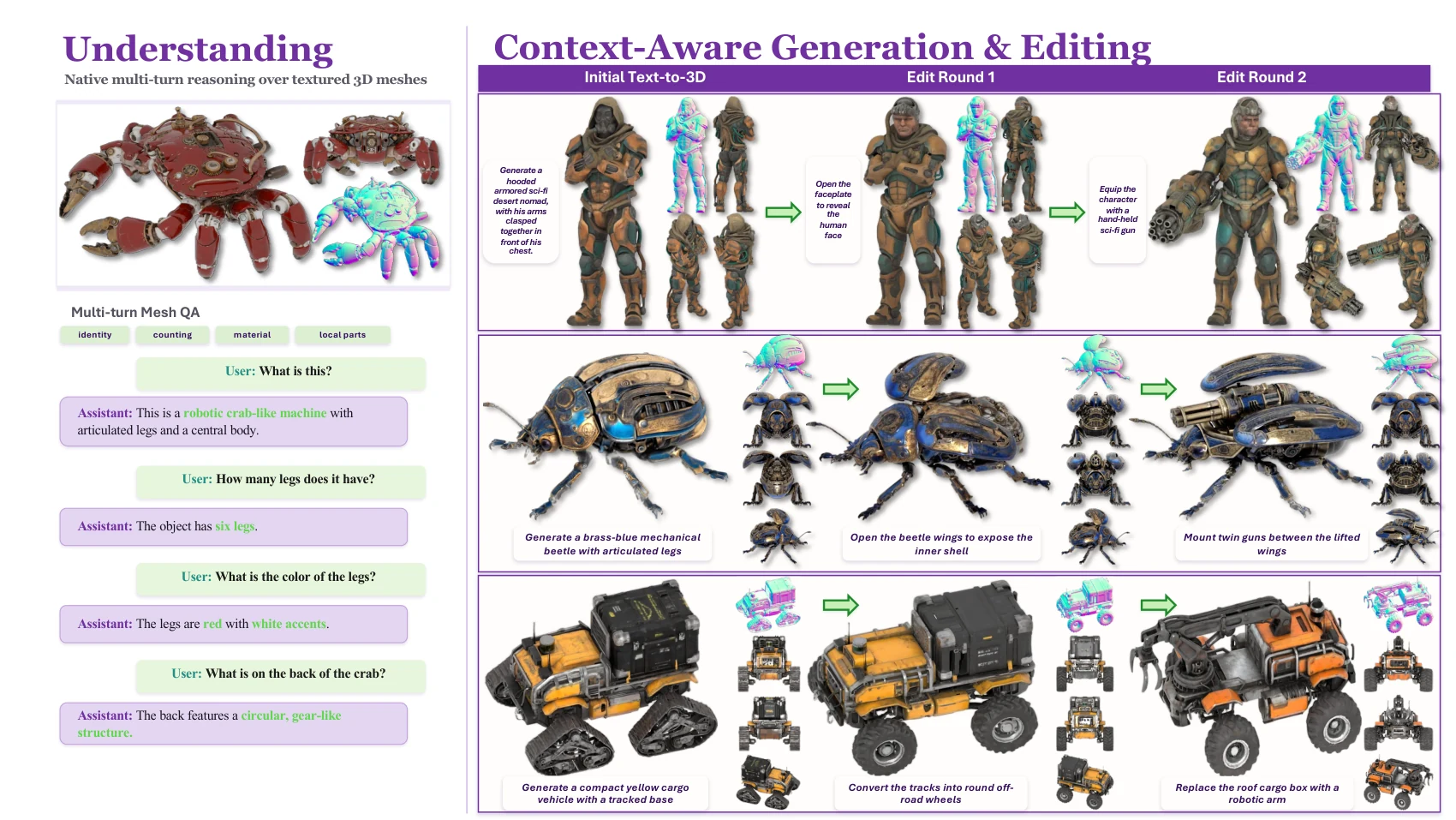

3D Understanding

Answer questions over mesh inputs while preserving the semantic priors of a multimodal backbone.

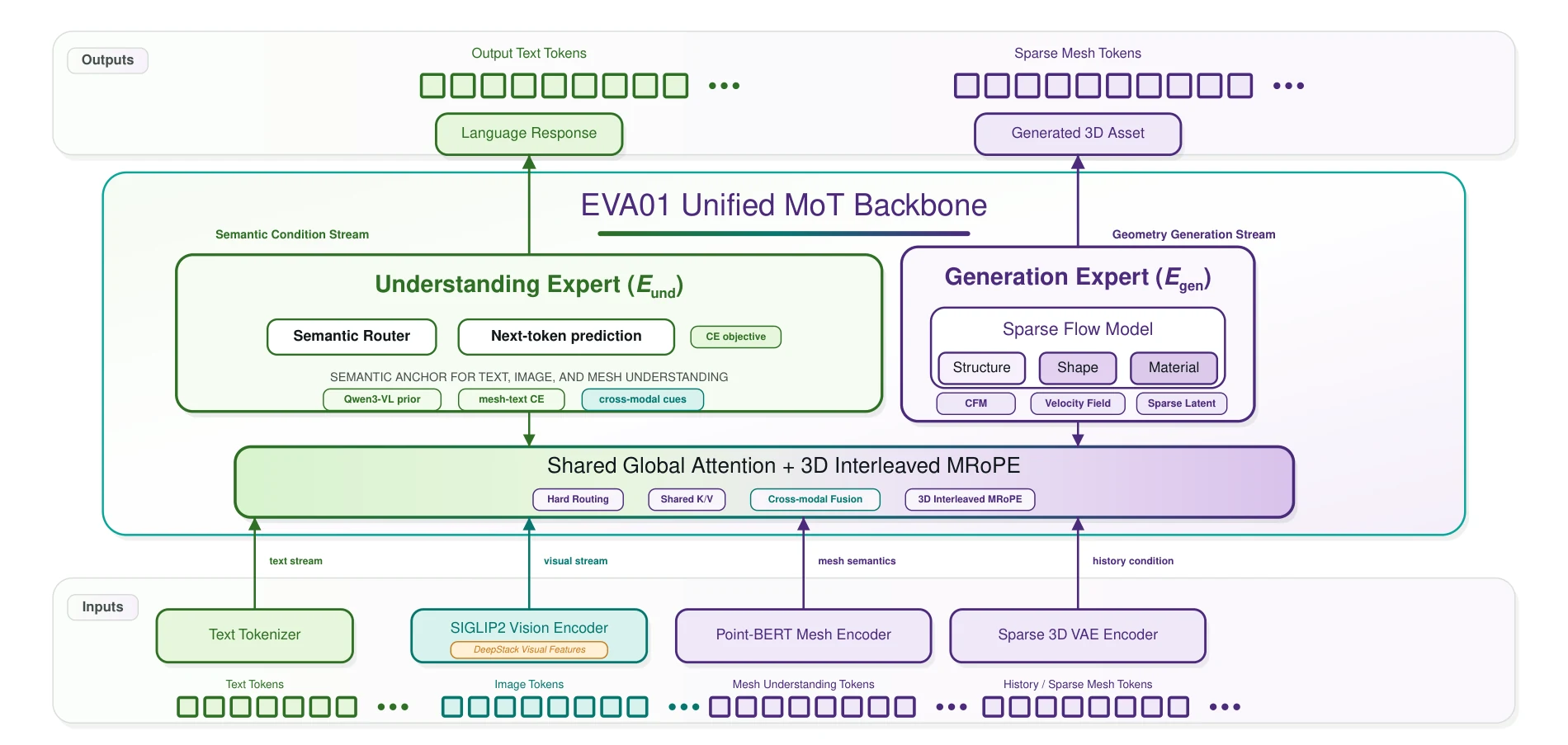

Unified Native 3D Understanding and Generation via Mixture-of-Transformers

EVA01 treats mesh as a native language for multimodal models: understand the object, generate geometry, then keep editing across long context without losing identity.

Capability Loadout

EVA01 extends the modality boundary of MLLMs so 3D is not an external attachment. Geometry becomes part of the sequence, routed through experts that share global context.

Generate native 3D structure from language without treating geometry as a detached post-process.

Apply localized structural edits across turns while keeping object identity inside the same interaction history.
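To make "geometry becomes part of the sequence" concrete, here is a minimal sketch of one way a mesh could be flattened into tokens and interleaved with text. The page does not describe EVA01's actual tokenizer, so the coordinate quantization, the `BINS` resolution, the `<mesh>` delimiters, and every function name below are assumptions made for illustration only.

```python
from typing import List, Sequence, Tuple

BINS = 128  # assumed quantization resolution; not stated on the page


def quantize(coord: float, lo: float = -1.0, hi: float = 1.0) -> int:
    """Map a coordinate in [lo, hi] to an integer bin in [0, BINS - 1]."""
    clamped = min(max(coord, lo), hi)
    return min(int((clamped - lo) / (hi - lo) * BINS), BINS - 1)


def mesh_to_tokens(
    vertices: Sequence[Sequence[float]], faces: Sequence[Sequence[int]]
) -> List[str]:
    """Flatten each triangle into 9 coordinate tokens (3 vertices x 3 axes)."""
    tokens: List[str] = []
    for face in faces:
        for vertex_index in face:
            tokens.extend(f"<c{quantize(c)}>" for c in vertices[vertex_index])
    return tokens


def build_sequence(
    prompt_words: Sequence[str],
    vertices: Sequence[Sequence[float]],
    faces: Sequence[Sequence[int]],
) -> List[Tuple[str, str]]:
    """Return one sequence of (token, modality) pairs: text first, then the mesh."""
    sequence = [(word, "text") for word in prompt_words]
    sequence.append(("<mesh>", "mesh"))  # hypothetical delimiter token
    sequence.extend((tok, "mesh") for tok in mesh_to_tokens(vertices, faces))
    sequence.append(("</mesh>", "mesh"))  # hypothetical delimiter token
    return sequence


# A single triangle, with coordinates already normalized to [-1, 1].
vertices = [(-1.0, -1.0, -1.0), (1.0, 1.0, 1.0), (0.0, 0.0, 0.0)]
faces = [(0, 1, 2)]
sequence = build_sequence(["describe", "this", "shape"], vertices, faces)
```

The modality tag carried by each token is what a routing step could later use to pick an expert, while the tokens themselves stay in one shared sequence.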

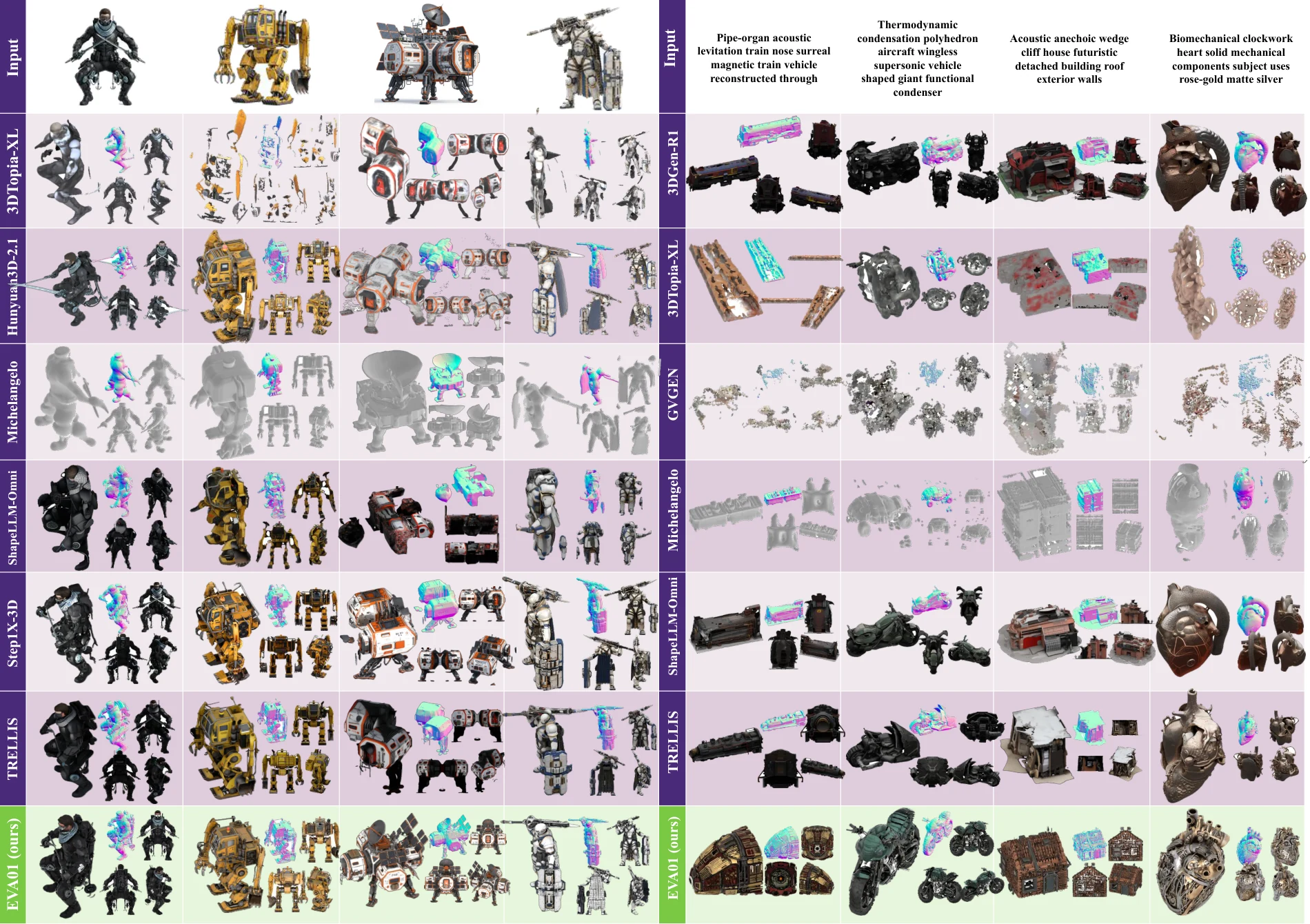

Result Stage

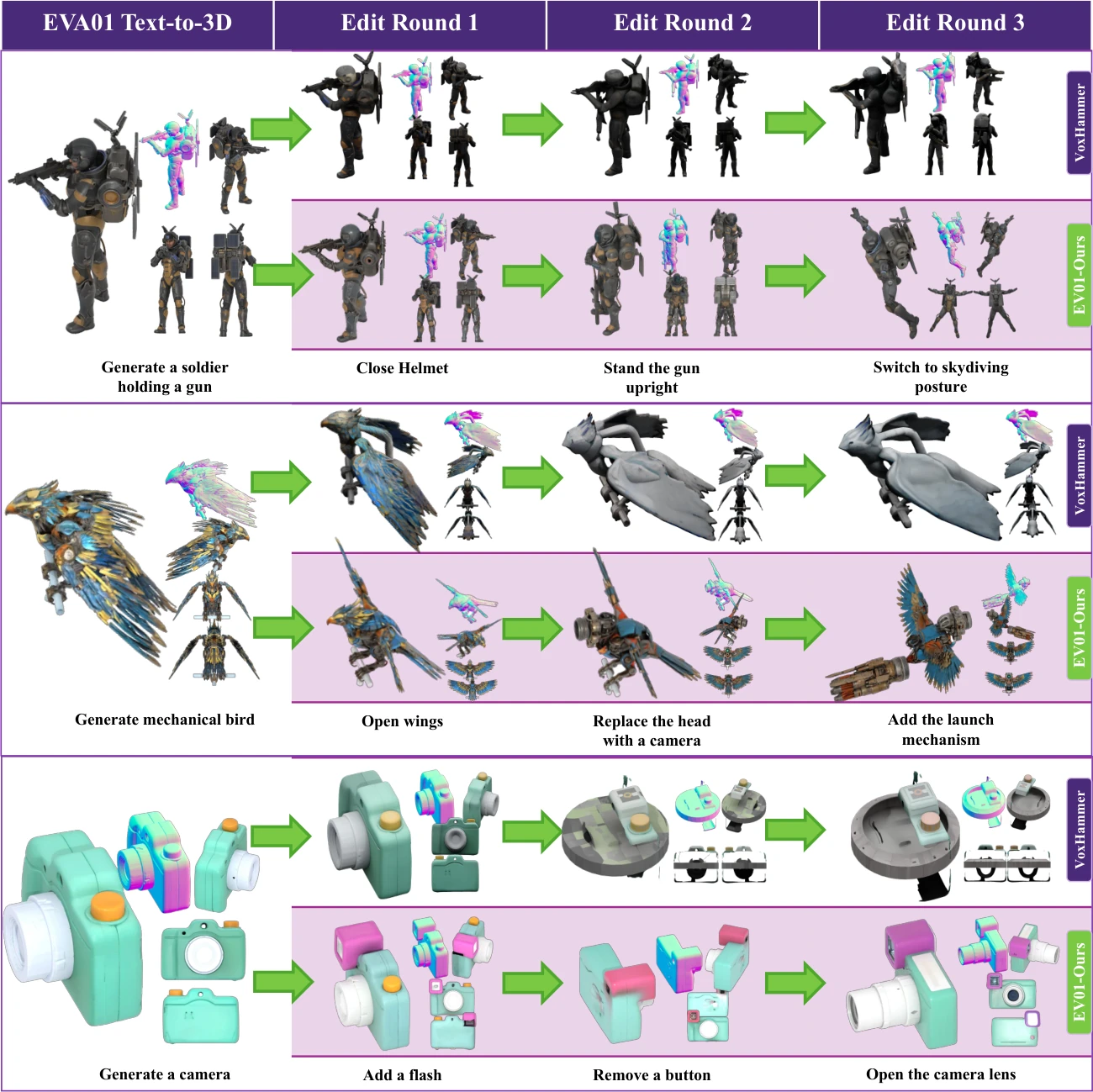

Instead of a one-shot reconstruction, EVA01 supports a continuous 3D workflow: generate an asset, ask about it, then edit it through the next instruction.

Every edit is conditioned on the full interaction context, enabling structural changes without explicit masks.
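The generate, ask, edit loop described above can be sketched as a session object that always passes the full history to the model. This is only an illustration of the conditioning pattern: `MeshSession`, `step`, and the stand-in `echo_model` are hypothetical names, and no real EVA01 API is implied.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# (role, content); content is text or a placeholder for a serialized mesh.
Turn = Tuple[str, str]


@dataclass
class MeshSession:
    # Hypothetical model interface: maps the full history to the next output.
    model: Callable[[List[Turn]], str]
    history: List[Turn] = field(default_factory=list)

    def step(self, instruction: str) -> str:
        """Run one turn, conditioning on every earlier turn."""
        self.history.append(("user", instruction))
        # No mask or region argument is passed: the only signal about
        # where to edit is the instruction plus the prior context.
        reply = self.model(list(self.history))
        self.history.append(("assistant", reply))
        return reply


def echo_model(history: List[Turn]) -> str:
    """Stand-in for a real model: reports how many turns it was given."""
    return f"<output conditioned on {len(history)} turns>"


session = MeshSession(model=echo_model)
session.step("Generate a wooden chair.")
session.step("How many legs does it have?")
last = session.step("Add armrests.")
print(last)  # <output conditioned on 5 turns>
```

Because the third call sees the generated asset and the earlier question in its input, an edit such as "Add armrests." can refer to "it" without restating the object.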

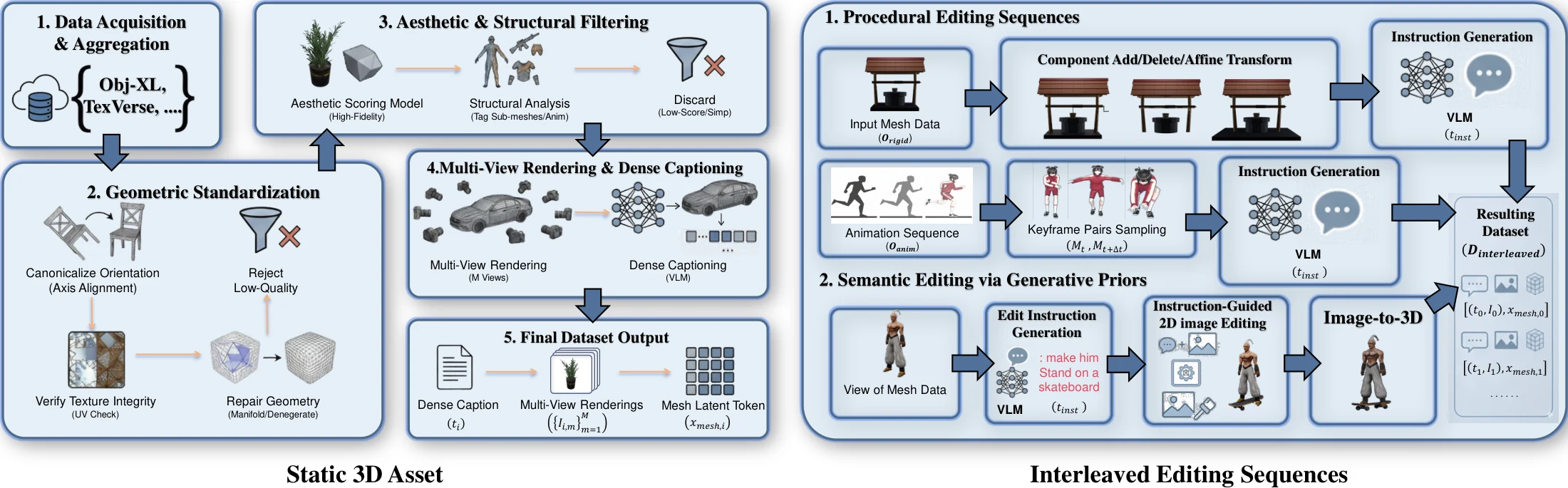

System Map

The Understanding Expert and Generation Expert are coupled through shared global self-attention, with hard modality routing separating semantic reasoning from geometric synthesis.
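The coupling described above can be illustrated with a toy attention layer in plain Python. In this sketch each token is hard-routed to one expert's projection weights, while attention scores are computed over all tokens regardless of expert. The dimensions, the random weights, the per-token expert tags, and all function names are assumptions; the causal mask, multiple heads, and feed-forward blocks are omitted for brevity.

```python
import math
import random

UND, GEN = "understanding", "generation"  # assumed per-token expert tags


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(rng, d):
    """One set of Q/K/V/output projections per expert."""
    return {
        name: [[rng.uniform(-0.5, 0.5) for _ in range(d)] for _ in range(d)]
        for name in ("q", "k", "v", "o")
    }


def mot_attention(tokens, routes, experts):
    """tokens: list of d-dim vectors; routes: one expert tag per token."""
    d = len(tokens[0])
    routed = list(zip(tokens, routes))
    # Hard routing: each token only touches its own expert's projections.
    q = [matvec(experts[r]["q"], x) for x, r in routed]
    k = [matvec(experts[r]["k"], x) for x, r in routed]
    v = [matvec(experts[r]["v"], x) for x, r in routed]
    outputs = []
    for i, r in enumerate(routes):
        # Shared global attention: token i scores against every token.
        scores = [sum(a * b for a, b in zip(q[i], k_j)) / math.sqrt(d) for k_j in k]
        weights = softmax(scores)
        mixed = [sum(w * v_j[c] for w, v_j in zip(weights, v)) for c in range(d)]
        outputs.append(matvec(experts[r]["o"], mixed))
    return outputs


rng = random.Random(0)
d = 4
experts = {UND: make_expert(rng, d), GEN: make_expert(rng, d)}
routes = [UND, UND, GEN, GEN]
tokens = [[rng.uniform(-1, 1) for _ in range(d)] for _ in routes]
outputs = mot_attention(tokens, routes, experts)
```

The design point is that parameters are separated per expert while the attention pattern is not, so tokens handled by one expert can still read from tokens handled by the other.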

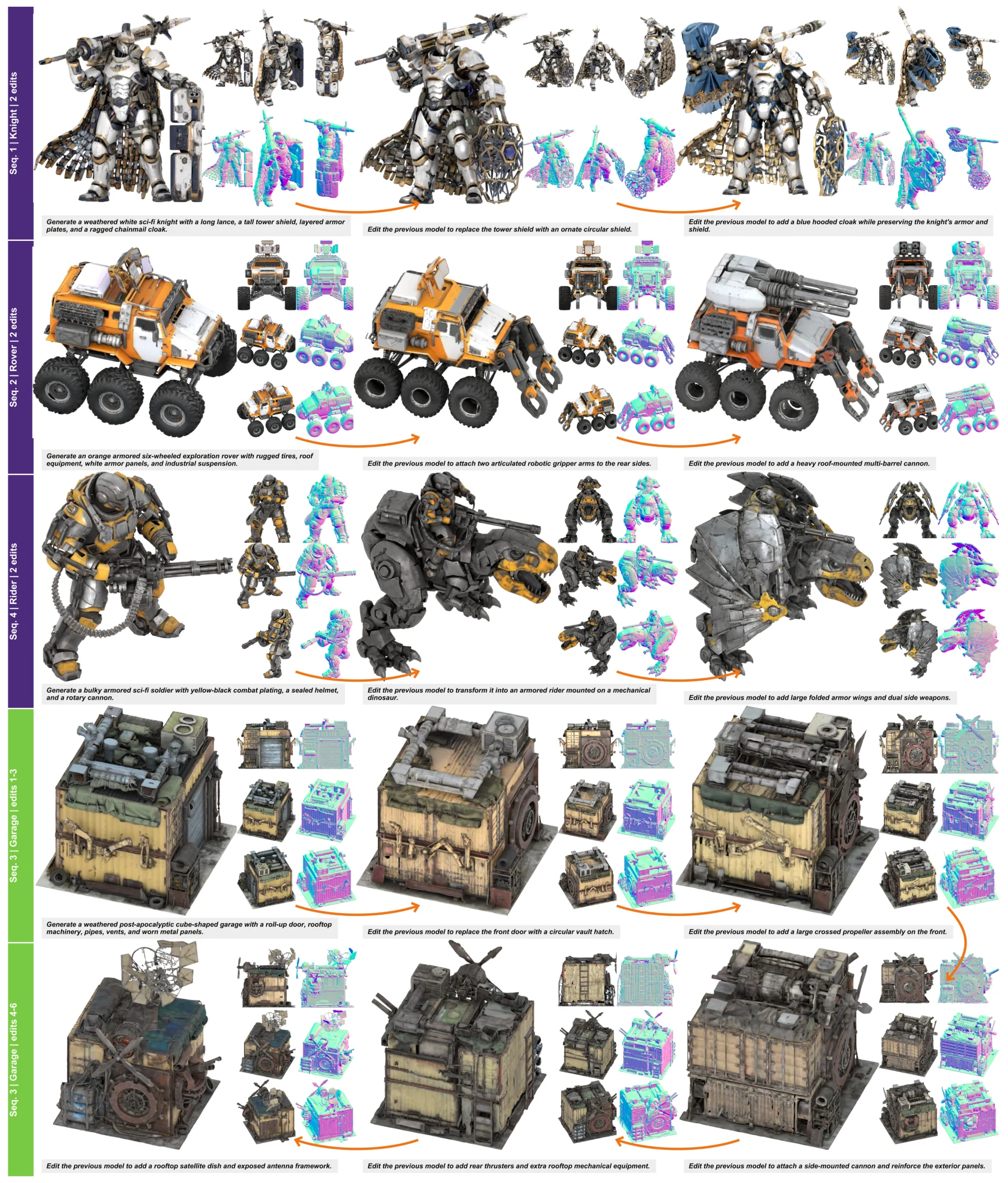

Gallery Cartridge

A compact look at EVA01's visual language: structured meshes, local edits, auxiliary views, and long-horizon identity preservation.

The page highlights mesh-level structure because EVA01's core claim is about native 3D reasoning and generation, not only rendered appearance.

Sequential instructions can add, replace, unfold, or attach parts while the model keeps the object identity in context.

EVA01 separates high-level multimodal reasoning from mesh-native generation, then reconnects them through shared context.

The same interaction loop can support concept exploration, structural refinement, and asset variation without restarting from zero.

Citation

Please cite EVA01 if you find the work useful.

@article{eva01seeleai2026,
  title   = {EVA01: Unified Native 3D Understanding and Generation via Mixture-of-Transformers},
  author  = {{SeeleAI Team}},
  journal = {arXiv preprint, forthcoming},
  year    = {2026}
}